SF: Wikipedia in the Age of AI and Bots

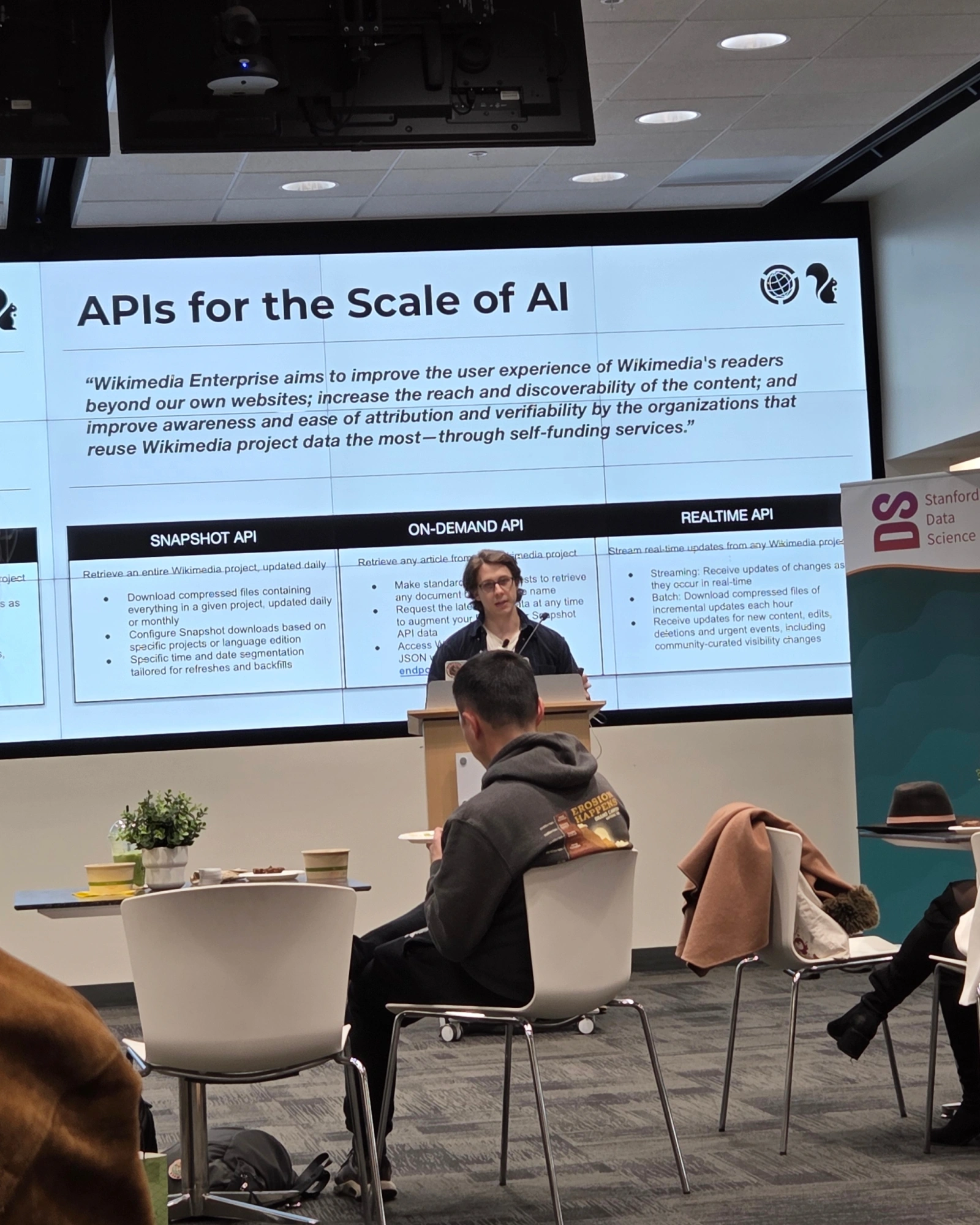

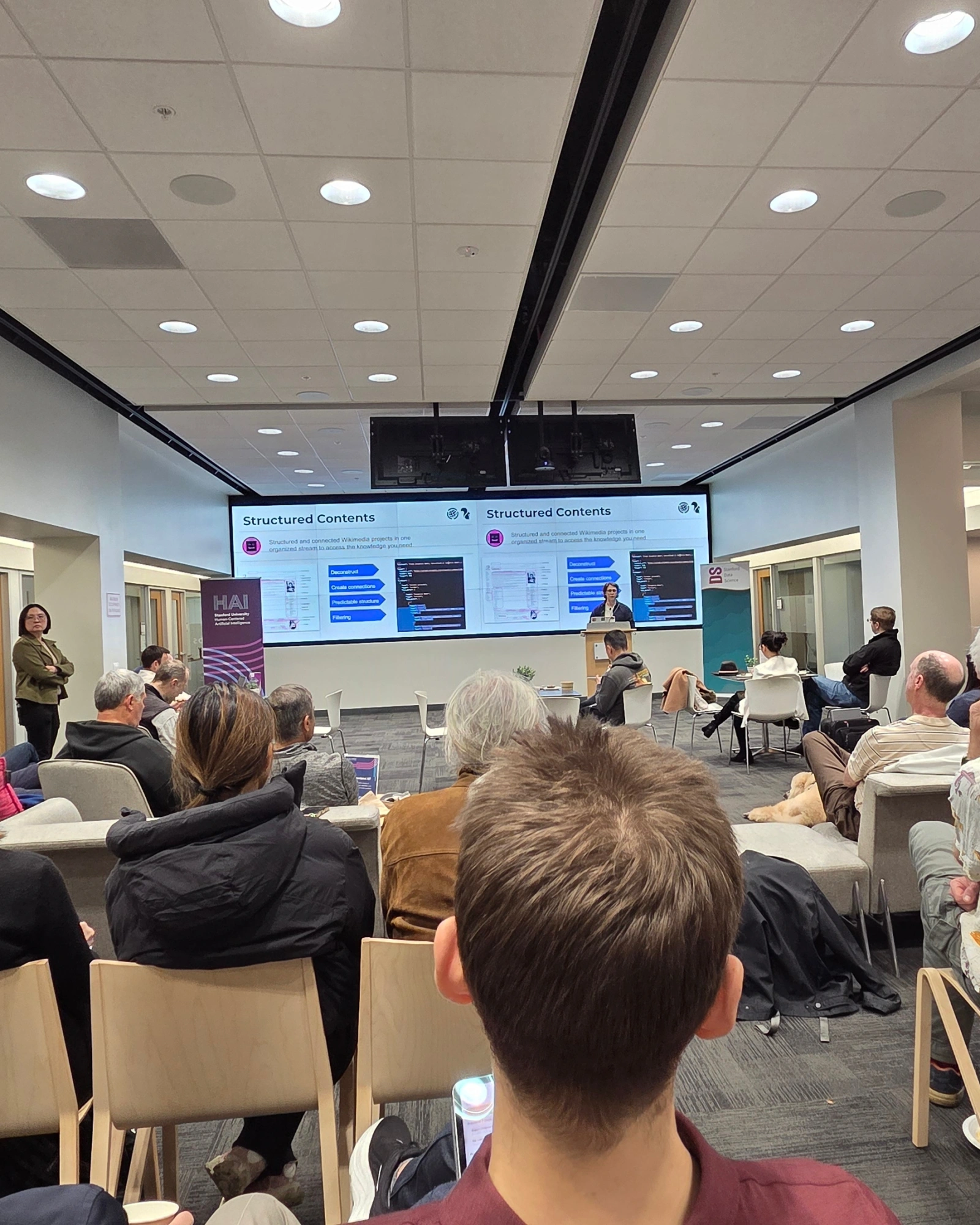

Drove down to Stanford HAI for Chris Petrillo's seminar on what is actually happening to Wikipedia in the LLM era. Petrillo runs product at Wikimedia Enterprise, and the framing was sober. Algorithmic bots have been part of Wikipedia's edit history since 2002, but the volume and behavior of automated traffic have changed sharply with the rise of large language models, to the point where scraping incidents are now a regular operational concern for the Wikimedia Foundation. Page views from humans are also dropping as readers consume AI-generated summaries instead of clicking through, which puts pressure on the donation-funded model that keeps the encyclopedia running. He walked through the editorial process behind the site, the AI-specific tooling and datasets Wikimedia is now releasing, and the risk strategies the team uses to reduce server load and mitigate abuse without breaking general availability. The point that stuck most was the human loop. Wikipedia gets stronger as more humans participate, and most generative systems quietly depend on that loop staying healthy. If your training pipeline includes Wikipedia content, it is worth thinking about what you are giving back. There was real Q&A at the end on policy and how to engage with the broader Wikimedia movement. Strong reminder that the data layer underneath every model has people behind it, and that those people can be worn down.